AI Agent Governance: How to Run Scripts Safely

- Publised May, 2026

-

Duc Nguyen (Dwight)

AI agent skills help agents execute scripts, tools and workflows. Learn how to govern skill scripts safely with permissions, approvals and sandboxing.

Table of Contents

Toggle

Key Takeaways

Skill scripts execution allows agents to run deterministic operations (like Python or Bash scripts) without exhausting large language model (LLM) context windows.

Unmanaged script execution introduces significant security risks, making comprehensive agent governance an absolute necessity for modern enterprises.

To run scripts safely, organizations must adopt advanced scanning, cryptographic signing, sandboxing, and centralized skill registries.

Introduce

An AI agent skill can package instructions, metadata, templates, reference files, and scripts into a reusable unit. Instead of asking users to explain the same process each time, the enterprise can encode that process into a skill. The agent can then load the right skill when the task requires it.

For business teams, this creates scale. For technology and security teams, it creates risk.

Once an agent can run scripts from a skill, it is no longer just producing text. It can take action in a system environment. It may read files, process data, trigger commands, call internal tools, or write outputs. That moves AI agent skills into the domain of enterprise governance.

The core question is no longer: “Can the agent do the task?”

The better question is: “Can the agent do the task safely, with the right permissions, controls, and accountability?”

What Are AI Agent Skills?

AI agent skills are reusable capability modules that help an AI agent complete a defined type of task.

A comprehensive agent skill typically consists of three foundational layers:

Instructions: Procedural knowledge, best practices, and specialized workflows dictated by internal policies, often configured via YAML frontmatter or Markdown.

Resources: Reference materials such as database schemas, application programming interface (API) documentation, and operational templates that agents can retrieve on demand.

Executable Code: Automated scripts that allow the agent to interact deterministically with external systems, local file systems, or databases.

This modular architecture allows a single foundational model to perform specialized tasks—such as evaluating supplier risks, closing end-of-month financial books, or triaging IT incidents—by simply loading the appropriate skill package at runtime.

Why Skill Scripts Execution Matters

Skill scripts execution means an AI agent can run a script included in, or referenced by, an AI agent skill.

That script may perform simple tasks, such as cleaning a CSV file or formatting a document. It may also perform sensitive actions, such as calling an API, updating a database, moving files, or triggering a workflow.

This is where AI agent skills become powerful.

Scripts give agents access to deterministic execution. Instead of relying only on natural language generation, the agent can use code to complete repeatable tasks with more consistency.

This is useful because scripts reduce manual work. They also reduce the need for long, fragile prompts.

However, script execution introduces a control problem. If the agent can run a script, the enterprise must know what the script can access, what it can change, and what happens if the output is wrong.

My position is simple: enterprises should allow script execution only when the execution environment is governed by design.

The Core Risks of Running Scripts From AI Agent Skills

Running scripts from AI agent skills creates value, but it also expands the enterprise risk surface. The key risks include:

- Malicious or compromised skills

A skill may contain unsafe instructions, hidden logic, or harmful scripts that access files, call unknown endpoints, or manipulate agent behavior. - Over-privileged execution

A skill script may receive more access than it needs, such as broad file access, network access, or system-level permissions. - Unexpected code execution

The agent may run the wrong command, pass unsafe inputs, or trigger a script in the wrong context, even without malicious intent. - Weak isolation

If scripts run too close to enterprise files, credentials, or internal systems, a small error can create a larger security incident. - Uncontrolled data access

A script may read sensitive, regulated, or confidential data that is not required for the task. - No approval for sensitive actions

Scripts that write data, call production APIs, or change business records can create risk if they run without human review. - Poor auditability

Without structured logs, teams cannot trace which skill ran, what data it accessed, what changed, or who approved the action. - Skill sprawl

When teams create or reuse skills without a central registry, the enterprise loses visibility into what agents can execute.

How to Run Scripts From Agent Skills Safely

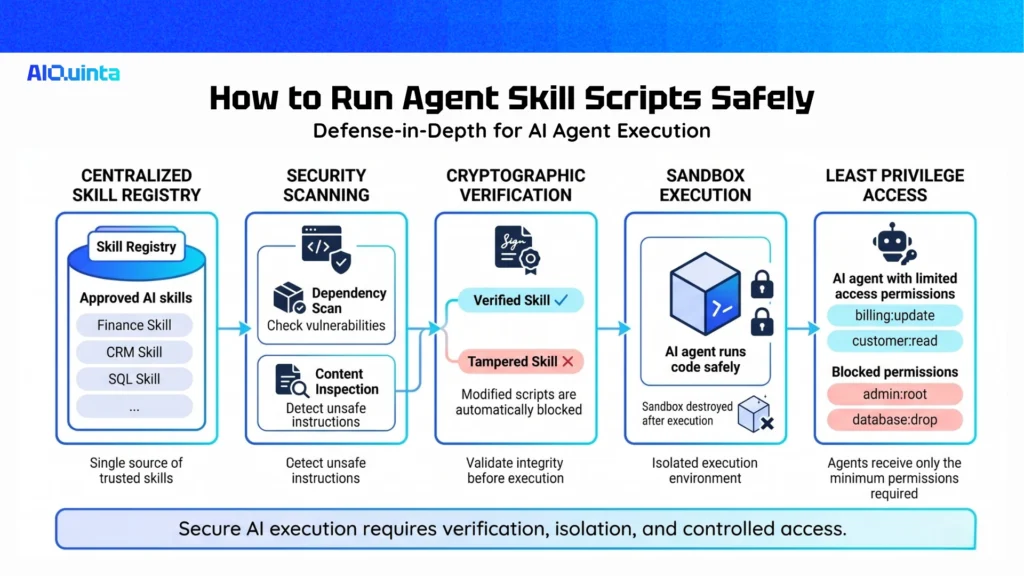

For IT leaders, security architects, and enterprise AI solution providers, mitigating the risks of autonomous execution is the highest priority. If you want to know how to run scripts from agent skills safely, you must implement a multi-layered defense-in-depth strategy. Here is the definitive framework for securing skill scripts execution in the enterprise.

Implement a Centralized Agent Skills Registry

Just as developers use artifact repositories to manage software dependencies, AI teams must utilize an Agent Skills Registry. A registry acts as a single, governed source of truth for all organizational skills. Before any agent can load a skill, that skill must be published, verified, and hosted within the central repository. This entirely eliminates the risk of agents pulling unverified or malicious open-source skills directly from the public internet. By adopting repository standards, organizations benefit from rich metadata extraction, comprehensive APIs, and strict version control.

Enforce Advanced Scanning and Integrity Checks

Security must begin before the script is ever executed. When a new skill is published to the registry, it should undergo a rigorous two-stage scanning process:

Dependency Scanning: The system must evaluate the script’s dependencies and binaries for known vulnerabilities (CVEs) and malicious code patterns.

Content Inspection: Ensure that the embedded instructions and reference data do not inadvertently instruct the agent to bypass security protocols.

Generate Cryptographic Provenance

To guarantee the integrity of a skill from creation to execution, implement cryptographic signing. If a skill passes the scanning phase, a cryptographically signed provenance evidence entity should be generated and stored alongside the skill. At runtime, the agent execution platform validates this signature. If the script or the skill’s instructions have been tampered with since they were approved, the execution is automatically blocked.

Utilize Strict Sandboxing Environments

Never allow an agent to execute scripts directly on a host machine’s root file system without boundaries. Scripts should be executed within ephemeral, tightly controlled sandbox environments (such as isolated containers). These environments must have restricted network access—capable of reaching only the specific internal APIs or databases required for the task. Once the script completes its deterministic operation and returns the output to the agent, the sandbox should be immediately destroyed.

Apply the Principle of Least Privilege

An AI agent should never operate with blanket administrative privileges. Implement granular Role-Based Access Control (RBAC) specifically tailored for agentic execution. If a customer service agent is executing a script to process a refund, that specific execution thread should only have the database permissions necessary to alter the billing ledger for that exact customer, and nothing more.

Recommended Enterprise Architecture

A safe AI agent skills architecture should include five core layers.

1. Skill Registry

The skill registry stores approved skills, owners, versions, metadata, and risk levels. It answers the question: “What skills are allowed in the enterprise?”

2. Policy Engine

The policy engine checks whether a specific user, agent, skill, script, and action are allowed. It answers the question: “Should this execution be permitted?”

3. Execution Sandbox

The sandbox runs scripts in a controlled environment. It answers the question: “Can this script run without exposing the broader system?”

4. Approval Layer

The approval layer pauses sensitive actions for human review. It answers the question: “Does this task need accountable human consent?”

5. Observability Layer

The observability layer captures logs, events, outputs, errors, and policy decisions. It answers the question: “Can we audit what happened?”

Together, these layers create a practical governance model for safe script execution.

Safe Script Execution Checklist

Before allowing AI agent skills to run scripts in production, check the following:

- The skill is registered in the central inventory.

- The skill has a business owner.

- The skill has a technical owner.

- The script source has been reviewed.

- The skill version is pinned.

- The script runs in a sandbox.

- File access is scoped.

- Network access is blocked by default.

- Secrets are not exposed by default.

- Commands are allowlisted.

- Inputs are validated with schemas.

- Outputs are captured.

- High-risk actions require approval.

- Logs are structured and searchable.

- Failed executions are monitored.

- Unsafe versions can be rolled back.

This checklist should become part of the enterprise AI release process.

Conclusion

AI agent skills are one of the most important patterns in enterprise AI because they turn agent behavior into reusable operating logic.

But this power changes the governance baseline.

When a skill includes script execution, the enterprise must stop thinking in terms of prompts alone. It must think in terms of runtime control, permission design, software supply chain, auditability, and operational risk.

My recommendation is direct: do not block script execution, but do not allow uncontrolled execution either.

The winning model is governed execution.

Use a central skill registry. Scope every permission. Run scripts in sandboxes. Enforce allowlists. Validate inputs. Require approval for sensitive actions. Capture structured logs.

That is how enterprises can use AI agent skills to move faster while keeping control where it belongs.

FAQs

What is skill scripts execution?

Skill scripts execution means an AI agent can run a script from a skill to process data, call tools, generate outputs, or complete workflow steps.

How do you run scripts from agent skills safely?

Run scripts in a sandbox, apply least privilege, validate inputs, use allowlisted tools, restrict network access, require approval for sensitive actions, and log every execution.

Why are AI agent skills a governance risk?

They can give agents access to scripts, tools, files, APIs, and business systems. Without governance, skills may create security, compliance, or operational risk.

Your Knowledge, Your Agents, Your Control