AI Agent Governance: Skill Permissions & Allowlist Tools

- Published May 2026


Duc Nguyen (Dwight)

Master AI agent governance with strict skill permissions. Secure workflows using allowlist tools for agent skills.


Key Takeaways

Transition to Autonomous Execution: Understand why modern AI agent security has shifted from simple prompt injection defense to robust execution governance and runtime boundaries.

Mitigate the “Double Agent” Risk: Learn how to prevent over-privileged agents from being manipulated by untrusted external content into executing malicious actions.

Enforce Strict Skill Permissions: Discover how to apply the principle of least privilege, per-skill namespacing, and declarative permission manifests to your AI workflows.

Deploy Allowlist Tools: Master the implementation of allowlist tools for agent skills to control exactly which databases, APIs, and file systems your AI can access.

Introduction

The evolution of artificial intelligence has moved rapidly from passive, stateless chat interfaces to highly autonomous AI agents capable of planning, reasoning, and executing complex tasks across live enterprise systems. As these agents transition from assistants to operators, the security paradigm must shift. Relying on simple prompt engineering or basic Identity and Access Management (IAM) is no longer sufficient.

Welcome to the era of Agent Governance. Today, the most critical question an engineering leader must answer is not “How smart is our AI?” but “What are the exact execution boundaries of our AI?” Managing AI agent skills, configuring skill permissions and allowed tools correctly, and enforcing allowlist tools for agent skills are the foundational pillars of securing modern AI architectures.

This comprehensive guide delves into the mechanisms of agent governance, providing technical strategies to secure your AI workflows while maintaining high operational throughput.

The Evolution of AI Agent Governance

AI agent security became an entirely different problem space the moment models stopped merely generating text and started reading private databases, deciding what information mattered, calling external tools, and taking independent actions.

From Passive Assistants to Autonomous Executors

Large Language Models (LLMs) initially served as standalone text generators. AI assistants added memory and context to these models, allowing for more cohesive user interactions. However, the true leap came with AI agents. Agents do not wait for granular human instructions; they act. They define sub-tasks, access tool integrations, and manipulate environments.

When an agent executes an operation—such as querying a production database, drafting and sending an email, or merging code—it crosses the boundary from a passive advisor to an active participant in your IT infrastructure. This shift necessitates Agent Governance: the framework of policies, logging, and architectural controls that restrict an AI’s operational scope.

The "Double Agent" Risk and The Lethal Trifecta

Zero Trust guidance for AI explicitly names a growing enterprise threat: the “double agent.” This is an overprivileged, manipulated, or misaligned AI agent operating inside an enterprise network that can be hijacked by malicious external inputs.

This vulnerability is best understood through the “Lethal Trifecta” of agent risk:

Access to Private Data: The agent has authorization to read internal emails, proprietary code, or customer databases.

Exposure to Untrusted Content: The agent processes external, unverified inputs (e.g., reading an untrusted issue ticket, browsing the web, or scanning inbound emails).

External Communication: The agent has the ability to send data outward (via APIs, emails, or pull requests).

When an AI agent possesses all three capabilities without strict governance, a simple prompt injection attack hidden within an external email or issue ticket can instruct the agent to exfiltrate private data to an attacker-controlled server. Preventing this requires hard constraints on what skills the agent can use and what tools it is allowed to call.
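To make the trifecta concrete, here is a minimal Python sketch that flags an agent whose combined skills cover all three risk factors. The capability labels, the `AgentConfig` class, and the skill names are hypothetical; a real platform would derive capabilities from each skill's declared permissions rather than hand-written labels.

```python
from dataclasses import dataclass, field

# Hypothetical capability labels for the three legs of the trifecta.
PRIVATE_DATA = "private_data"
UNTRUSTED_INPUT = "untrusted_input"
EXTERNAL_COMMS = "external_comms"
LETHAL_TRIFECTA = frozenset({PRIVATE_DATA, UNTRUSTED_INPUT, EXTERNAL_COMMS})


@dataclass
class AgentConfig:
    name: str
    # Skill name -> capability labels that the skill grants (hypothetical schema).
    skills: dict[str, set[str]] = field(default_factory=dict)

    def capabilities(self) -> set[str]:
        """Union of capabilities across every skill the agent holds."""
        caps: set[str] = set()
        for granted in self.skills.values():
            caps |= granted
        return caps


def has_lethal_trifecta(agent: AgentConfig) -> bool:
    """True when the agent's skills jointly cover all three risk factors."""
    return LETHAL_TRIFECTA <= agent.capabilities()


support_bot = AgentConfig(
    "support-bot",
    {"crm_read": {PRIVATE_DATA}, "inbox_scan": {UNTRUSTED_INPUT}, "send_email": {EXTERNAL_COMMS}},
)
report_bot = AgentConfig(
    "report-bot",
    {"sales_db_read": {PRIVATE_DATA}, "post_internal_slack": {"internal_comms"}},
)

for agent in (support_bot, report_bot):
    print(agent.name, has_lethal_trifecta(agent))
# support-bot True
# report-bot False
```

The check deliberately looks at the agent as a whole: each skill may look harmless on its own, and removing any one leg (for example, `send_email`) is enough to break the trifecta.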

What Are AI Agent Skills?

An AI agent skill is a specialized, functional capability that enables the agent to perform specific tasks outside of its neural network. Think of skills as plug-and-play modules or APIs that bridge the gap between language generation and real-world execution.

Common examples of AI agent skills include:

Data Processing: Reading, editing, or extracting tables from PDFs and .xlsx files.

System Interaction: Executing SQL queries, running Python code in a sandbox, or interfacing with AWS infrastructure.

Communication: Drafting and sending messages via Slack, Teams, or email gateways.

Why Managing Skill Permissions is Critical

If an agent is given a broad “database access” skill, it might use that skill to read sensitive user data when processing a seemingly innocuous request. The challenge is that an IAM role can dictate who is allowed to access the database, but it cannot evaluate if the agent’s current autonomous plan matches the organization’s intent. Therefore, managing skill permissions and allowed tools acts as the last line of defense. By governing the skills themselves, you ensure the agent can only take actions that are explicitly predefined, audited, and contextually appropriate.

Deep Dive into AI Agent Skill Permissions

Securing an agentic workflow is not about limiting the model’s intelligence; it is about constraining its physical capabilities. Just as you wouldn’t give a junior developer root access to a production database on their first day, you should not give an AI agent unbounded access to your APIs.

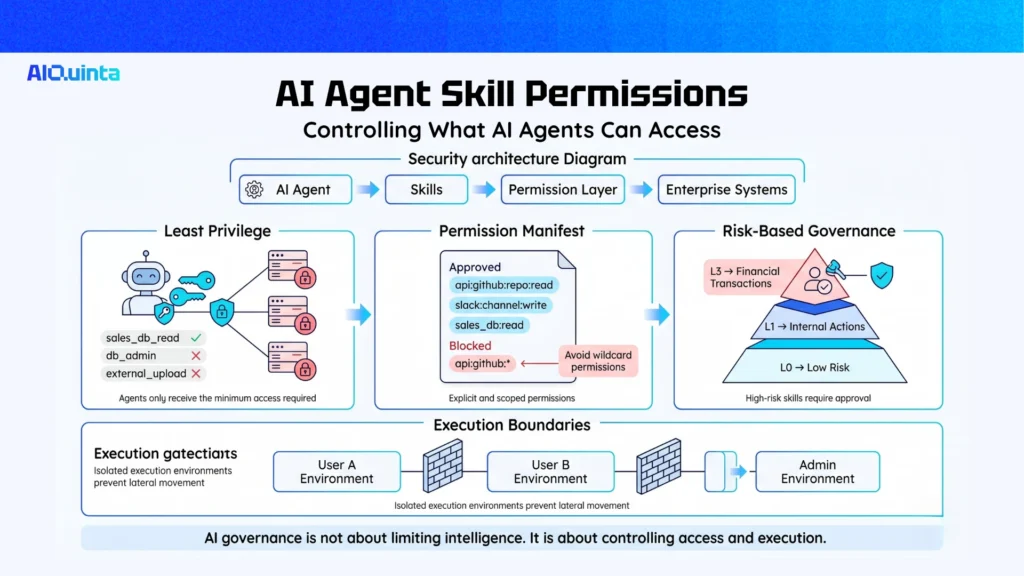

The Principle of Least Privilege

The foundational rule of agent governance is the principle of least privilege. An AI agent’s skill permissions should be limited to the absolute minimum scope required to accomplish its specific business objective.

If an agent’s job is to read daily sales data and write a summary report, it should only possess a sales_db_read skill, not a db_write or db_admin skill. Furthermore, the environment should restrict its external network access so it cannot transmit the summary report anywhere except the designated internal Slack channel.
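The sales-summary example can be expressed as a small policy object. This is a minimal sketch, assuming a hypothetical `AgentPolicy` class, a hypothetical `post_to_slack` skill for delivering the report, and a made-up channel identifier `slack:#sales-daily`; the point is that both the skill set and the egress destinations are closed lists.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentPolicy:
    skills: frozenset[str]   # skills the agent may invoke
    egress: frozenset[str]   # destinations the agent may send output to

    def violations(self, skill: str, destination: Optional[str] = None) -> list[str]:
        """Return every rule the requested action would break (empty list = allowed)."""
        problems = []
        if skill not in self.skills:
            problems.append(f"skill not granted: {skill}")
        if destination is not None and destination not in self.egress:
            problems.append(f"egress blocked: {destination}")
        return problems


# Hypothetical least-privilege policy for the daily sales-summary agent.
sales_summary = AgentPolicy(
    skills=frozenset({"sales_db_read", "post_to_slack"}),
    egress=frozenset({"slack:#sales-daily"}),
)

print(sales_summary.violations("sales_db_read"))
# []
print(sales_summary.violations("db_write"))
# ['skill not granted: db_write']
print(sales_summary.violations("post_to_slack", "https://attacker.example/upload"))
# ['egress blocked: https://attacker.example/upload']
```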

Scoped Permissions and Declarative Manifests

According to the OWASP Agentic Skills Top 10 checklist, every AI agent skill must declare a permission manifest. This manifest is a declarative configuration file that explicitly lists the scoped permissions the skill requires.

Key Evidence to Look For in a Manifest:

Explicit Enumeration: Permissions are strictly listed (e.g., api:github:repo:read), not open-ended (e.g., api:github:*).

Per-Skill Namespacing: The skill is isolated from other skills and agents. Process-level isolation ensures there is no shared memory or unregulated filesystem access between skills.

Risk Tier Classification: Skills are assigned risk tiers (e.g., L0 for basic math calculations, L3 for external financial transactions), and higher-tier skills require stricter governance or human-in-the-loop approvals.
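A permission manifest and the three checks above can be sketched as follows. The scope format (`api:<service>:<resource>:<action>`), the `SkillManifest` fields, and the rule that tiers L2 and above need human approval are assumptions for illustration, not the OWASP schema.

```python
import re
from dataclasses import dataclass

# Assumed scope format: api:<service>:<resource>:<action>, no wildcards.
SCOPE_PATTERN = re.compile(r"^api(:[a-z0-9_-]+){3}$")
HITL_MIN_TIER = 2  # assumption: tiers L2 and L3 require human approval


@dataclass(frozen=True)
class SkillManifest:
    name: str
    namespace: str           # isolated namespace claimed by this skill
    risk_tier: int           # 0 (e.g. math) to 3 (e.g. external payments)
    permissions: tuple[str, ...]
    requires_human_approval: bool = False


def validate_manifest(m: SkillManifest) -> list[str]:
    """Return a list of problems; an empty list means the manifest passes."""
    errors = []
    for scope in m.permissions:
        if "*" in scope:
            errors.append(f"wildcard scope not allowed: {scope}")
        elif not SCOPE_PATTERN.match(scope):
            errors.append(f"malformed scope: {scope}")
    if not 0 <= m.risk_tier <= 3:
        errors.append(f"unknown risk tier: L{m.risk_tier}")
    elif m.risk_tier >= HITL_MIN_TIER and not m.requires_human_approval:
        errors.append(f"tier L{m.risk_tier} requires human approval")
    return errors


def shared_namespaces(manifests: list[SkillManifest]) -> set[str]:
    """Namespaces claimed by more than one skill (these break per-skill isolation)."""
    seen: set[str] = set()
    dupes: set[str] = set()
    for m in manifests:
        if m.namespace in seen:
            dupes.add(m.namespace)
        seen.add(m.namespace)
    return dupes


reader = SkillManifest("github_reader", "skills.github_reader", 1, ("api:github:repo:read",))
too_broad = SkillManifest("github_admin", "skills.github_admin", 1, ("api:github:*",))
payments = SkillManifest("wire_transfer", "skills.wire_transfer", 3, ("api:bank:transfer:create",))

for m in (reader, too_broad, payments):
    print(m.name, validate_manifest(m))
# github_reader []
# github_admin ['wildcard scope not allowed: api:github:*']
# wire_transfer ['tier L3 requires human approval']
```

Rejecting manifests at registration time, rather than at call time, means an over-broad skill never reaches the inventory in the first place.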

User vs. Agent Execution Boundaries

A critical architectural decision is splitting trust boundaries. A single integration gateway should be treated as one trusted operator boundary. If you run one gateway for mutually untrusted users, or combine high-privilege and low-privilege tasks in the same runtime, a compromised agent can move laterally across user profiles.

Best practices dictate deploying separate gateways or utilizing separate host environments when mixed-trust use is unavoidable. The execution boundary must clearly delineate what the agent is doing autonomously versus what requires an explicit cryptographic signature or human approval.

Implementing Allowlist Tools for Agent Skills

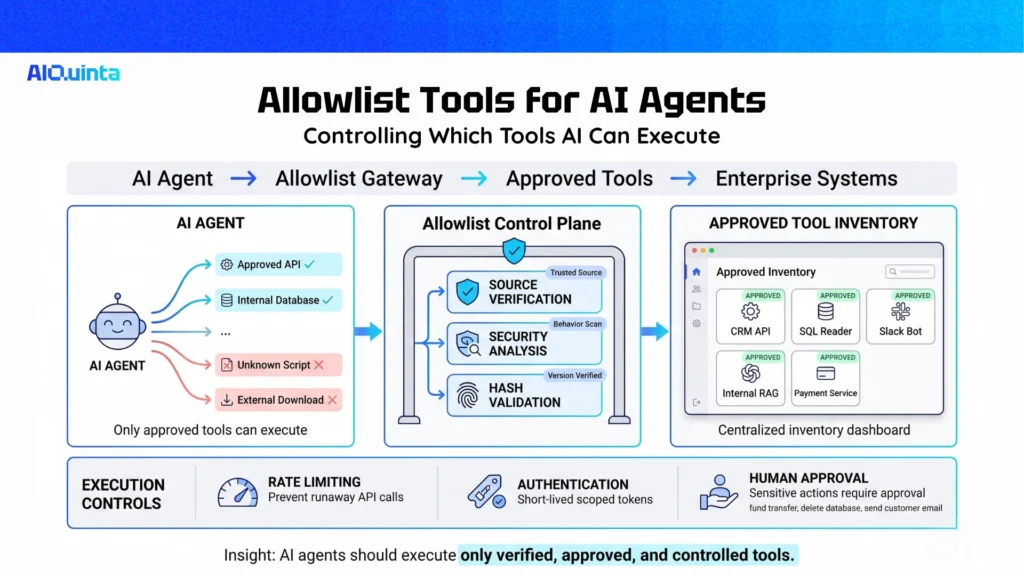

One of the most effective governance mechanisms is moving away from a “blacklist” approach (trying to block bad tools) to a strict “allowlist” architecture.

What Are Allowlist Tools?

Allowlist tools for agent skills are highly curated, pre-approved software utilities, APIs, and libraries that an AI agent is explicitly permitted to invoke. If an agent attempts to call a tool or access an endpoint that is not on the allowlist, the execution is automatically blocked by the runtime middleware (e.g., an Agentic Control Plane or gateway).

With an allowlist in place, a hallucinated tool call to an unknown endpoint, or an attempt to manipulate the agent into downloading unverified code from the internet, is blocked before it executes.
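The default-deny behavior can be sketched as a tiny dispatcher. `AllowlistGateway` and the tool names are hypothetical; production control planes add authentication, argument validation, and logging on top of this core idea: a tool that was never registered cannot be called, whatever the model asks for.

```python
from typing import Any, Callable


class ToolNotAllowed(Exception):
    """Raised when the agent requests a tool that is not on the allowlist."""


class AllowlistGateway:
    """Hypothetical default-deny dispatcher sitting between the agent and its tools."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        # Only tools that passed review are registered.
        self._tools[name] = fn

    def is_allowed(self, name: str) -> bool:
        return name in self._tools

    def call(self, name: str, **kwargs: Any) -> Any:
        if not self.is_allowed(name):
            # Unknown and hallucinated tool names are blocked, not guessed at.
            raise ToolNotAllowed(name)
        return self._tools[name](**kwargs)


gateway = AllowlistGateway()
gateway.register("search_docs", lambda query: [f"result for {query}"])

print(gateway.call("search_docs", query="refund policy"))
# ['result for refund policy']
try:
    gateway.call("download_and_run", url="https://example.com/payload.sh")
except ToolNotAllowed as blocked:
    print("blocked:", blocked)
# blocked: download_and_run
```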

How to Build a Secure Skill Inventory

To successfully implement allowlist tools, enterprise organizations should establish a Centralized Skill Inventory. Every skill the agent can use must be registered here.

Criteria for Allowlisting a Tool:

Source Verification: The skill must be obtained from a verified, trusted source (e.g., an internal repository or a certified marketplace). Security teams must confirm publisher identity to avoid typosquatting attacks.

Behavioral Security Analysis: The tool must pass an automated scan that evaluates its intent and code signatures to check for hidden backdoors.

Cryptographic Hashing: The specific version of the tool should be hashed. The agent is only permitted to execute the tool if the runtime hash matches the allowlist hash, ensuring tamper-evident execution.
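Hash pinning can be sketched with Python's standard `hashlib` and `hmac` modules. The `ALLOWLIST` mapping and the artifact contents are hypothetical stand-ins; in practice the pinned digest would be recorded when the tool version is approved, and the artifact bytes would be read from the package the runtime is about to load.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Hypothetical approved artifact and its pinned digest, recorded at review time.
APPROVED_SUMMARIZER = b"def summarize(text):\n    return text[:200]\n"
ALLOWLIST = {"summarize": sha256_hex(APPROVED_SUMMARIZER)}


def may_execute(tool: str, artifact: bytes) -> bool:
    """Allow execution only if the tool is listed and its bytes match the pinned hash."""
    pinned = ALLOWLIST.get(tool)
    if pinned is None:
        return False  # not on the allowlist at all
    # compare_digest avoids leaking how many characters matched via timing.
    return hmac.compare_digest(pinned, sha256_hex(artifact))


tampered = APPROVED_SUMMARIZER + b"# injected change\n"
print(may_execute("summarize", APPROVED_SUMMARIZER))     # True
print(may_execute("summarize", tampered))                # False
print(may_execute("unknown_tool", APPROVED_SUMMARIZER))  # False
```

Any change to the artifact, even a single byte, produces a different digest, so a silently modified tool fails closed instead of running.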

Best Practices for Tool Integration

When mapping out your allowed tools, you must enforce execution constraints at the integration layer:

Rate Limiting: WebSocket connections and API calls made by the agent must be rate-limited. This prevents runaway loops where a confused agent calls a paid API 10,000 times in a minute.

Authentication: The agent should authenticate its tool calls using scoped, short-lived tokens. Avoid hardcoding long-lived Channel Access Tokens or API keys directly into the agent’s prompt context.

Human-in-the-Loop (HITL) Gateways: For highly sensitive tools on the allowlist (e.g., transferring funds, dropping a database table, emailing customers), the governance middleware should pause execution and route a request to a human operator for final authorization.
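Rate limiting and a human-in-the-loop gate can share one dispatch path. The sketch below uses a standard token-bucket limiter; the `SENSITIVE_TOOLS` set, the `approve` callback (standing in for routing the request to a human operator), and the injectable clock are assumptions made to keep the example self-contained and testable.

```python
import time
from typing import Callable


class TokenBucket:
    """Refills `rate` tokens per second up to `capacity`; each call spends one token."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical tools that always pause for a human decision.
SENSITIVE_TOOLS = {"transfer_funds", "drop_table", "email_customers"}


def dispatch(tool: str, bucket: TokenBucket, approve: Callable[[str], bool]) -> str:
    if not bucket.allow():
        return "rate_limited"        # stops runaway loops before they reach the API
    if tool in SENSITIVE_TOOLS and not approve(tool):
        return "denied_by_human"     # approve() stands in for the HITL queue
    return "executed"


fake_now = [0.0]
bucket = TokenBucket(rate=1.0, capacity=2, clock=lambda: fake_now[0])
deny_all = lambda tool: False

print([dispatch("search_docs", bucket, deny_all) for _ in range(3)])
# ['executed', 'executed', 'rate_limited']
fake_now[0] = 1.0  # one second later, one token has been refilled
print(dispatch("transfer_funds", bucket, deny_all))
# denied_by_human
```

Checking the rate limit before asking for approval keeps a looping agent from flooding human reviewers with requests.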

Security Frameworks for Agentic Workflows

Aligning with NIST and OWASP Guidelines

The NIST (National Institute of Standards and Technology) initiatives on securing AI systems emphasize that many AI risks resemble ordinary software security issues but are complicated by the non-deterministic nature of LLMs. NIST advises that when model outputs are fused with software functionality that changes external state (tools), strict runtime monitoring must be enforced.

Similarly, the OWASP Agentic Skills Top 10 provides an invaluable checklist for evaluating AI agent skills. Following OWASP recommendations ensures that you are actively checking for minimized permissions, isolated execution environments, and robust audit logging for every tool interaction.
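Audit logging for every tool interaction can be added with a decorator. This is a minimal sketch: the in-memory `AUDIT_LOG` list stands in for an append-only store, the record fields are assumptions, and tools are assumed to be called with keyword arguments, as is common when the model supplies arguments as a JSON object.

```python
import functools
import json
import time
from typing import Any, Callable

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store


def audited(agent_id: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Record who called which tool, with what arguments, and how it ended."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(**kwargs: Any) -> Any:
            record = {"ts": time.time(), "agent": agent_id,
                      "tool": fn.__name__, "args": kwargs}
            try:
                result = fn(**kwargs)
                record["outcome"] = "ok"
                return result
            except Exception as exc:
                record["outcome"] = f"error: {type(exc).__name__}"
                raise
            finally:
                # Written on success and failure alike.
                AUDIT_LOG.append(json.dumps(record, default=str, sort_keys=True))
        return wrapper
    return decorator


@audited(agent_id="report-bot")
def sales_db_read(region: str) -> dict:
    # Hypothetical tool body.
    return {"region": region, "total": 1200}


sales_db_read(region="EU")
entry = json.loads(AUDIT_LOG[-1])
print(entry["agent"], entry["tool"], entry["args"], entry["outcome"])
# report-bot sales_db_read {'region': 'EU'} ok
```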

Zero Trust Architecture for AI Agents

Zero Trust guidelines advocate for extending traditional Zero Trust principles (“Never trust, always verify”) to the AI lifecycle. In practice, this means treating the AI agent itself as an untrusted entity.

Verify Explicitly: Every tool call the agent makes must be authenticated and authorized against its current policy manifest.

Use Least Privileged Access: Limit the agent’s reach using allowlists and Just-In-Time (JIT) permissions.

Assume Breach: Architect the system with the assumption that the agent will eventually ingest a malicious prompt. By strictly controlling its output tools and network access, you contain the blast radius of a successful manipulation.
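"Verify explicitly" and just-in-time access combine naturally: every call is checked, and grants expire on their own. The `JitAuthorizer` class, the scope strings, and the TTL value below are hypothetical; the injectable clock exists only to make the example deterministic.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Grant:
    agent_id: str
    scope: str
    expires_at: float


class JitAuthorizer:
    """Hypothetical per-call authorizer: every tool call is checked, and grants expire."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._grants: list[Grant] = []
        self._clock = clock

    def grant(self, agent_id: str, scope: str, ttl_seconds: float) -> None:
        self._grants.append(Grant(agent_id, scope, self._clock() + ttl_seconds))

    def authorize(self, agent_id: str, scope: str) -> bool:
        now = self._clock()
        # Drop expired grants so they can never be reused.
        self._grants = [g for g in self._grants if g.expires_at > now]
        return any(g.agent_id == agent_id and g.scope == scope for g in self._grants)


now = [0.0]
authz = JitAuthorizer(clock=lambda: now[0])
authz.grant("report-bot", "api:sales:db:read", ttl_seconds=300)

print(authz.authorize("report-bot", "api:sales:db:read"))   # True
print(authz.authorize("report-bot", "api:sales:db:write"))  # False
now[0] = 301.0  # five minutes later the grant has lapsed
print(authz.authorize("report-bot", "api:sales:db:read"))   # False
```

Because nothing is granted by default and every grant lapses, a manipulated agent's reach is limited to the scopes it was handed for the current task.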

Conclusion

The transition toward autonomous engineering requires a foundational shift in how we approach software security. AI agents are no longer just chat interfaces; they are powerful operators interacting dynamically with your databases, code repositories, and external networks.

Implementing robust Agent Governance is not optional. By rigorously managing skill permissions and allowed tools, prioritizing the principle of least privilege, and deploying strict allowlist tools for agent skills, organizations can safely harness the immense productivity benefits of autonomous AI. Protect your enterprise from the “double agent” threat by ensuring your AI operates strictly within the secure, verifiable boundaries you define.

FAQs

What are skill permissions?

Skill permissions define what a skill can access and do. They may control data access, file access, tool use, script execution, network access, and approval requirements.

What does “allowed tools” mean in agent skills?

Allowed tools are the tools a skill is pre-approved to use. For example, a document review skill may be allowed to use Read and Search, but not Bash, Email, or external APIs.

Are skill instructions enough for security?

No. Skill instructions guide the agent, but they should not be the only control. Enterprises also need system-level permissions, tool allowlists, sandboxing, approval workflows, logging, and regular review.
